The $380 Billion Paradox: Why the Pentagon Is Threatening the World’s Fastest-Growing AI Company

The most honest sentence in the entire AI industry right now is one nobody wants to say out loud.

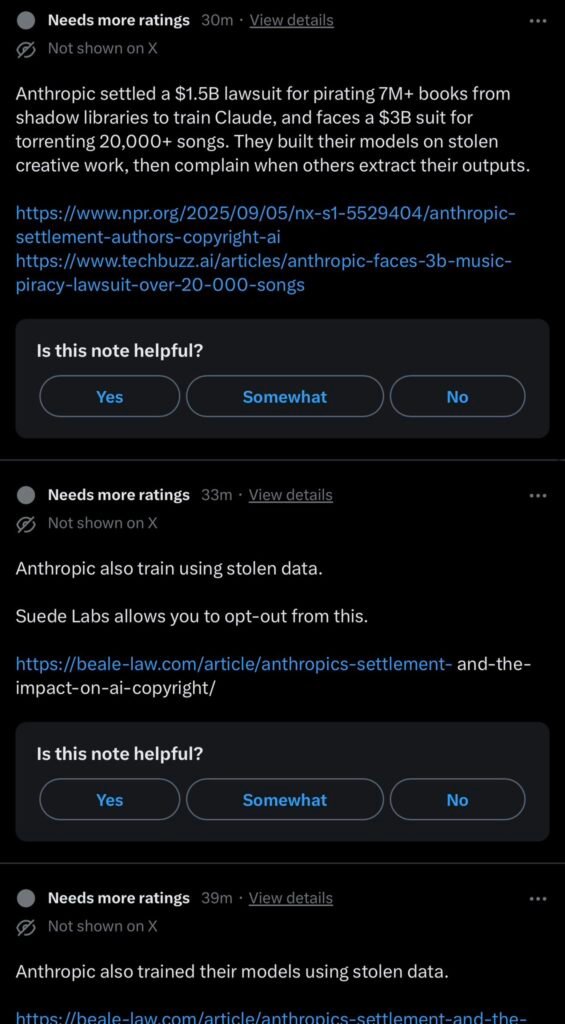

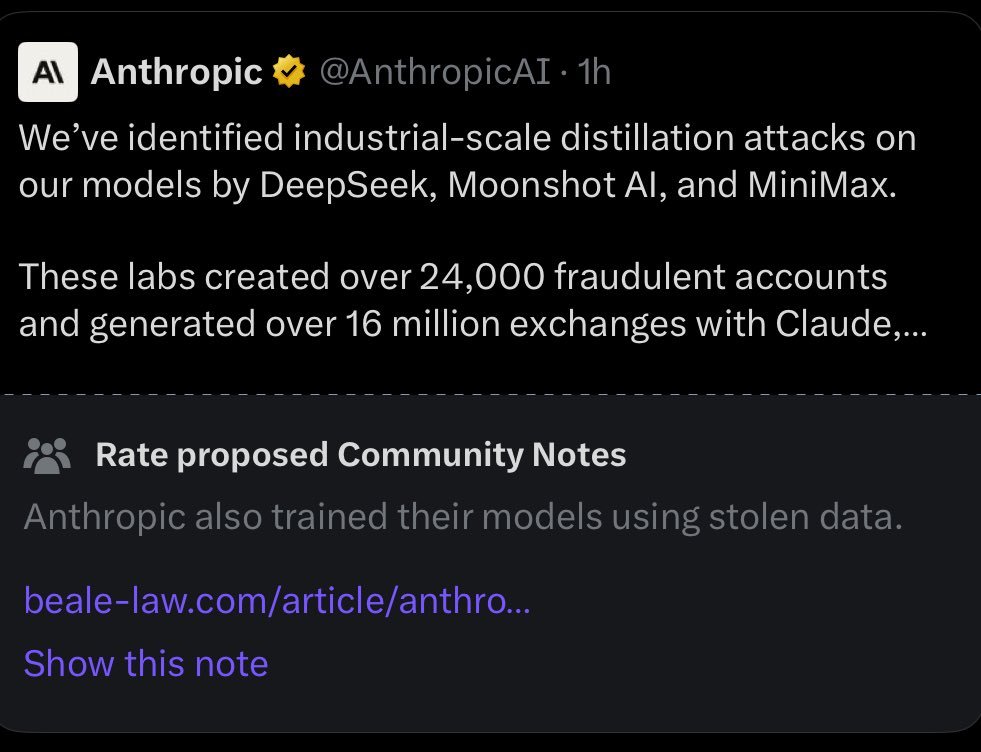

It was spoken this week by Elon Musk, a competitor, and it was framed as an accusation: “Anthropic is guilty of stealing training data at massive scale and has had to pay multi-billion dollar settlements for their theft. This is just a fact.”

Musk is correct. The $1.5 billion settlement over the more than 7 million pirated books Anthropic downloaded to train Claude is real. It is documented. But framing this as an Anthropic problem rather than an industry-wide structural reality is competitive positioning disguised as moral outrage.

Every major foundation model was trained on data its creators did not have explicit permission to use. Every single one. OpenAI faces ongoing lawsuits from authors, newspapers, and programmers. Google trained on the entire indexed internet. Meta used Library Genesis datasets. And xAI’s Grok was trained on the full corpus of X posts, a decision Musk made unilaterally as the platform’s owner.

The structural reality is that the entire foundation model industry sits on an unresolved intellectual property question worth hundreds of billions of dollars. Every lab trained on data it did not license. Anthropic’s $1.5 billion payment is not a punishment; it is a retroactive licensing fee paid under legal pressure. Every lab’s legal strategy is to get big enough that such settlements become a cost of doing business rather than an existential threat.

The reason Musk is raising this now has nothing to do with ethics. Anthropic is in conversations with the Pentagon. xAI is competing for the same contracts. Framing your competitor as a data thief three days before a defense meeting is not moral clarity. It is positioning.

And the deepest irony? The United States wants to restrict Chinese access to American AI models on intellectual property grounds. But every American AI model was built on intellectual property its creators took without permission from millions of authors, coders, artists, and publishers. The entire moral framework for the technology export control regime rests on an argument that the American labs themselves have not resolved domestically.

That is not hypocrisy anyone in the industry wants to discuss. The moment you acknowledge it, the legal and regulatory exposure scales to every company simultaneously.

I. The Pentagon Is About to Give an American AI Company the Huawei Treatment

This morning, Defense Secretary Pete Hegseth summoned Anthropic CEO Dario Amodei to the Pentagon. A senior Defense official told Axios: “This is not a friendly meeting. This is a sh*t-or-get-off-the-pot meeting.”

Here’s what’s actually happening:

Claude is the only AI model running inside the Pentagon’s classified systems. It is the most capable model for sensitive defense and intelligence work. It was used in the Maduro raid in January through Palantir, the first confirmed use of a commercial AI in a classified military operation.

Now the Pentagon wants all restrictions removed. “All lawful purposes.” Including capabilities that would let the military continuously monitor the social media posts, voter registration, concealed carry permits, and demonstration records of every American citizen using AI at scale.

Anthropic said no to two things: mass surveillance of Americans and fully autonomous weaponry.

The Pentagon’s response: threatening to designate Anthropic a “supply chain risk.”

That designation is reserved for foreign adversaries. The last company to receive it was Huawei. It would force every defense contractor in America to certify they don’t use Claude in their workflows. Given that 8 of the Fortune 10 use Claude, this would cascade through the entire defense industrial base.

A senior Pentagon official told Axios: “It will be an enormous pain in the ass to disentangle, and we are going to make sure they pay a price for forcing our hand like this.”

Another official: “The problem with Dario is, with him, it’s ideological. We know who we’re dealing with.”

Meanwhile: OpenAI, Google, and xAI have already agreed to remove their safeguards for military use. OpenAI deployed ChatGPT to all 3 million DoD personnel through GenAI.mil. xAI holds a separate $200M contract backed by Musk’s political proximity to the administration.

Anthropic is the only one that said no.

Think about what’s being asked. The company whose own safety chief resigned two weeks ago warning “the world is in peril.” The company that just published a report showing its most advanced model “knowingly assisted with chemical weapons research” in testing. That company is being punished for refusing to hand the U.S. military unrestricted access to that same technology.

The Pentagon admits competing models “are just behind” for classified work. They need Claude. But they’re willing to blow up the relationship rather than accept two restrictions that protect American citizens from their own government.

This is the most important story in AI right now. It’s not about one $200M contract. It’s about whether the U.S. military can compel a private company to remove safety restrictions on technology its own developers have demonstrated is dangerous, under threat of receiving the same designation as a Chinese national security threat.

Dario Amodei walks into that meeting with $380 billion in enterprise value, $14 billion in revenue, and a principle that may cost him both.

II. The Fastest Company in History Is Running Out of Road

Every institutional investor with a technology allocation has the same number circled on their whiteboard this morning: twenty-seven.

That is the ratio of Anthropic’s enterprise value to its annualized revenue after Anthropic closed a $30 billion Series G round on February 12, 2026, at a $380 billion post-money valuation. Twenty-seven times revenue for a company that had no commercial product four years ago. Twenty-seven times revenue for a company whose chief executive told Fortune, days after banking one of the largest private funding rounds in history, that a twelve-month delay in artificial intelligence progress would make him bankrupt.

The number deserves to sit on that whiteboard because it encodes a bet most allocators have not fully priced. At 27x, investors are not purchasing a stake in an enterprise software company. They are purchasing a derivative on the exact arrival date of what Dario Amodei calls “a country of geniuses in a datacenter.”
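As a quick check on the arithmetic, the sketch below divides the $380 billion post-money valuation by the $14 billion annualized run-rate. The function name and the 10x comparison point are illustrative assumptions, not figures from the round itself.

```python
# Back-of-envelope check of the revenue multiple discussed above.
# Inputs are the article's figures, in billions of USD:
# $380B post-money valuation and $14B annualized run-rate.
VALUATION_B = 380.0
RUN_RATE_B = 14.0


def revenue_multiple(valuation_b: float, revenue_b: float) -> float:
    """Valuation divided by annualized revenue (hypothetical helper)."""
    return valuation_b / revenue_b


multiple = revenue_multiple(VALUATION_B, RUN_RATE_B)
print(f"Implied multiple: {multiple:.1f}x")

# Hypothetical comparison: the run-rate at which the same valuation
# would sit at 10x revenue (10x is an illustrative assumption).
TARGET_MULTIPLE = 10.0
print(f"Run-rate needed for {TARGET_MULTIPLE:.0f}x: ${VALUATION_B / TARGET_MULTIPLE:.0f}B")
```

Holding the valuation fixed, the multiple only compresses to that illustrative 10x level if revenue grows roughly 2.7 times from the current run-rate.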

The revenue beneath the multiple is real. Anthropic grew from $1 billion in annualized revenue in December 2024 to $4 billion by July 2025, $9 billion by December 2025, and $14 billion by the second week of February 2026, a trajectory that represents 10x annual growth sustained for three consecutive years. No enterprise technology company in recorded history has compounded at this rate at this scale.
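The quoted run-rate figures span roughly fourteen months, so they can be checked against the "10x" characterization only for the most recent period; the three-year claim rests on earlier figures not listed here. A minimal sketch, assuming each data point falls on the first of its month, computes the compound growth multiple annualized to twelve months. The helper names are hypothetical.

```python
from datetime import date

# Annualized run-rate data points quoted above (billions of USD).
# Assumption: each figure is dated to the first of its month.
points = [
    (date(2024, 12, 1), 1.0),
    (date(2025, 7, 1), 4.0),
    (date(2025, 12, 1), 9.0),
    (date(2026, 2, 1), 14.0),
]


def months_between(a: date, b: date) -> int:
    """Whole calendar months from a to b."""
    return (b.year - a.year) * 12 + (b.month - a.month)


def annualized_multiple(start: float, end: float, months: int) -> float:
    """Compound growth multiple scaled to a 12-month period."""
    return (end / start) ** (12 / months)


start_date, start_rev = points[0]
for d, rev in points[1:]:
    m = months_between(start_date, d)
    print(
        f"{d:%b %Y}: {rev / start_rev:.0f}x in {m} months, "
        f"~{annualized_multiple(start_rev, rev, m):.1f}x annualized"
    )
```

Each window annualizes to roughly 9x to 11x, which is consistent with the "10x" description for the latest year; the figures here do not by themselves cover the two years before it.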

Claude Code, Anthropic’s agentic coding product, went from zero revenue to more than $2.5 billion in annualized billings in approximately nine months. More than 500 customers now spend over $1 million annually, up from roughly a dozen two years ago. Eight of the Fortune 10 companies are Claude customers.

This is not a question of whether Anthropic has built something extraordinary. It has. The question confronting every allocator evaluating this name is whether six structural fractures, each independently capable of derailing the thesis, are adequately compensated by a multiple that requires near-perfect execution through 2028.

III. The Six Fractures

1. The Margin Bridge That Does Not Exist

The consensus narrative treats the revenue number as the story and the margin structure as a detail that will resolve itself. This is backwards. Revenue at 40% gross margins and revenue at 77% gross margins are different businesses entirely. The distance between where Anthropic sits today and where it needs to arrive by 2028 represents one of the most aggressive margin expansion assumptions ever embedded in a private technology valuation.

2. The Customer Concentration Paradox

Anthropic’s fastest-growing product, Claude Code, is rapidly becoming a substitute for the very software engineers whose employers are its largest customers. The better the product gets, the more it threatens the labor costs of the companies paying for it. This is not a sustainable equilibrium.

3. The Distribution Walls

Three distribution channels closed simultaneously in January 2026. The terms of access are tightening. The cost of customer acquisition is rising faster than revenue.

4. The $4.5 Billion Legal Minefield

Beyond the $1.5 billion settlement, there are unresolved claims. Some name the founder personally. The intellectual property question is not settled; it is merely delayed.

5. The Pentagon Standoff

The safety brand is now a revenue constraint. Refusing the Pentagon is principled. It is also expensive. If the “supply chain risk” designation moves forward, the defense industrial base will be forced to choose between Claude and compliance.

6. Competitive Convergence

Six well-capitalized competitors are converging on capability parity. Inference costs are declining by an order of magnitude annually. The technical moat on which the valuation thesis depends is collapsing.
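To make the Margin Bridge fracture concrete, the sketch below applies the two gross margins it names (40% and 77%) to the same $14 billion run-rate. This is illustrative only: it assumes revenue is held constant and ignores every cost below gross profit, and the helper name is hypothetical.

```python
# Illustrative only: the $14B run-rate (the article's figure) at the two
# gross margins named in the Margin Bridge fracture. Revenue is held
# constant by assumption; operating costs are ignored.
RUN_RATE_B = 14.0
MARGINS = (0.40, 0.77)


def gross_profit(revenue_b: float, margin: float) -> float:
    """Gross profit in billions for a given gross margin (hypothetical helper)."""
    return revenue_b * margin


for margin in MARGINS:
    gp = gross_profit(RUN_RATE_B, margin)
    cost = RUN_RATE_B - gp  # cost of revenue, mostly compute in this sketch
    print(f"{margin:.0%} margin: ${gp:.2f}B gross profit, ${cost:.2f}B cost of revenue")
```

At the higher margin the same revenue yields about 1.9 times the gross profit, which is the gap the valuation assumes will close by 2028.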

IV. The Actual Mechanism Nobody Is Mapping

Let us return to Musk’s accusation.

Anthropic accused Chinese labs of distilling Claude through its public API. Musk responded by pointing out Anthropic trained on stolen data. Gergely Orosz, a respected engineer, wrote “Anthropic can’t have it both ways.”

All three are correct simultaneously. And all three are being selectively honest.

The structural reality is that the entire industry sits on unresolved questions. Every lab trained on data it did not license. Every lab knows this. And the actual creators whose work built every one of these models are watching billionaires argue about who stole from them more ethically.

The Pentagon is now forcing a resolution to a different question: whether a private company can refuse the military’s demand for unrestricted access to technology its own safety reports describe as dangerous.

Dario Amodei walks into that meeting with $380 billion in valuation and a principle.

The market is about to learn which one matters more.